Archive for the ‘Swahili’ category

New output options for Andika!

December 1st, 2017

Gosh – such a long time since I posted! Too much stuff happening. I just completed a major refactoring of the PDF output possibilities for Andika!, adding lots of options that you can use to tweak how the Swahili text gets printed. All the details are in Chapter 8 of the revised manual.

New Swahili materials

January 9th, 2016

For much of 2015, I was working on Swahili. The main effort has been on Andika!, a set of tools to allow handling of Swahili in Arabic script. I spoke on this at a seminar in Hamburg in April 2015 (thanks to Ridder Samsom for the invite!), and since then my top priority has been to use Andika! for the real-world task of providing a digital version of Swahili poetry in Arabic script. So I started working on two manuscripts of the hitherto-unpublished Utenzi wa Jaʿfar (The Ballad of Jaʿfar), adding pieces to Andika! as required, and I finished that last summer. Although there are still a few rough edges, I think it’s worth putting it up on the web.

Since then, I’ve been working on a paper to demonstrate how having a ballad text in a database might help in textually analysing it, and the results so far are very interesting. For instance, half of the stanzas use just 3 rhymes, one in five verbs is a speech verb, the majority of verbs (67%) have no specific time reference, 70% of words in rhyming positions are Arabic-derived, 83% of lines have two syntactic slots, and the four commonest sequences display marked word-order almost as frequently as the “normal” unmarked word-order. I’m currently looking at repetition and formula use, and there the ability to query the database to bring up similar word-sequences is crucial. Another benefit has been that concordances (based either on word or root) can be created very easily.

Ideally, you could load all your classical Swahili manuscripts into a big database, and this would give you an overview of spelling variations and word usage over time and space, with all this text available to be queried easily and printed in either Arabic or Roman script (or both), with a full textual apparatus if desired.

For conclusions based on a poetic corpus to be valid, they would also have to be compared with a prose corpus, and I’m also offering a small contribution to that, with a new Swahili corpus based on Wikipedia. This contains about 2.8m words in 150,000 sentences, so it’s reasonably comprehensive. In contrast to the work I’ve done on Welsh corpora, I used the NLTK tokenizer to split the sentences, and I’m not entirely sure yet whether I like the results as much as the hand-rolled splitting code I was using earlier. Something to come back to, possibly.
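For comparison, a hand-rolled splitter of the general kind mentioned above might look something like the sketch below. It is only an illustration, not the code used for the Welsh corpora: the boundary rule, the function name `split_sentences` and the short abbreviation list are all assumptions made for the example.

```python
import re

# Hypothetical abbreviation list for illustration only; a real one
# would be compiled from the corpus itself.
ABBREVIATIONS = {"k.m.", "n.k.", "dkt."}

# Candidate boundary: sentence-final punctuation, then whitespace,
# then an uppercase letter starting the next sentence.
BOUNDARY = re.compile(r"(?<=[.!?])\s+(?=[A-Z])")


def split_sentences(text):
    """Split text into sentences, rejoining splits made after abbreviations."""
    sentences = []
    buffer = ""
    for chunk in BOUNDARY.split(text.strip()):
        if not chunk:
            continue
        buffer = f"{buffer} {chunk}".strip()
        # A chunk ending in a known abbreviation is not a full sentence yet.
        if buffer.split()[-1].lower() in ABBREVIATIONS:
            continue
        sentences.append(buffer)
        buffer = ""
    if buffer:
        sentences.append(buffer)
    return sentences


sample = "Dkt. Juma alifika jana. Alisoma kitabu! Je, umemwona?"
for sentence in split_sentences(sample):
    print(sentence)
```

A rule-based splitter like this is easy to inspect and adjust, but it only knows the abbreviations it has been given, which is the usual trade-off against a trained tokenizer.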

Andika!

November 8th, 2012

After 3 months of work, I’m pleased to have completed Andika!, a set of tools to allow Swahili to be written in Arabic script, with converters to turn this into Roman script and vice versa. Not least, it provides a way to digitise Swahili manuscripts so that they can be printed attractively, and so that their contents are available for research using computational linguistic methods. More details are on the website.

Keyboard Layout Editor

August 20th, 2012

I’m revising my Swahili-Arabic keyboard layout, and was looking for something that allows me to see what’s on each key, because it’s difficult trying to remember where everything is when you’re making revisions every 5 minutes.

I found Simos Xenitellis’ Keyboard Layout Editor here, with the code here. To install on Ubuntu 12.04, you need Java installed. The simplest way of getting Oracle (Sun) Java (I find OpenJDK still has a few issues with some applications) is to install Andrei Alin’s PPA:

sudo add-apt-repository ppa:webupd8team/java

sudo apt-get update

sudo apt-get install oracle-java7-installer

Next, install python-lxml and python-antlr.

Then download the KLE tarball from GitHub, untar it, and move into the directory. Download the ANTLR package:

wget http://antlr.org/download/antlr-3.1.2.jar

and process the ANTLR grammars:

export CLASSPATH=$CLASSPATH:antlr-3.1.2.jar

java org.antlr.Tool *.g

Then launch KLE:

./KeyboardLayoutEditor

With the blog post above, the interface is quite simple to get to grips with – you just drag your desired character into the appropriate slot on the key. The “Start Character Map” button does not launch the GNOME character chooser unless you edit line 951 near the end of the file KeyboardLayoutEditor from:

os.system(Common.gucharmapapp)

to

os.system(Common.gucharmappath)

but the character chooser doesn’t seem to want to change fonts. So instead I opened the KDE kcharselect app manually, and dragged the characters from that.

In general, KLE is a nice app, but perhaps needs a bit of a springclean. Things that struck me were:

- You can only drag and drop the character glyph – you can’t edit it once in place on the key, you can only remove it. So you can’t, say, right-click and change 062D to 062E if you’ve made a mistake.

- Likewise, you can’t drag characters between the slots on a key to move them around – you have to remove them, and then drag them back in from scratch.

- The final file is a little untidy – it doesn’t use tabs to separate the columns of characters, or a blank line between the row-groups (AB to AE) of the keyboard.

- If you’re using the most likely course of having two modifiers (Shift and AltGr), the written file is missing the line:

include "level3(ralt_switch)"

without which the AltGr options will not work. So you need to add that manually.
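If you regenerate the layout file often, the manual step could be scripted. The following is a minimal sketch, assuming the written file contains an ordinary `xkb_symbols "name" {` header; the function name `ensure_altgr_include` and the sample layout text are made up for the example and are not part of KLE.

```python
import re

INCLUDE_LINE = 'include "level3(ralt_switch)"'

# Header of a symbols block, e.g.:  xkb_symbols "basic" {
HEADER = re.compile(r'(xkb_symbols\s+"[^"]*"\s*\{[ \t]*\n)')


def ensure_altgr_include(symbols_text):
    """Add the level3 include after the first symbols header if it is absent."""
    if INCLUDE_LINE in symbols_text:
        return symbols_text
    # Insert the include as the first line inside the first symbols block.
    return HEADER.sub(
        lambda m: m.group(1) + f"    {INCLUDE_LINE}\n",
        symbols_text,
        count=1,
    )


# Made-up layout fragment, standing in for the file written by the editor.
sample = (
    'xkb_symbols "basic" {\n'
    "    key <AD01> { [ U0636, U064E ] };\n"
    "};\n"
)
print(ensure_altgr_include(sample))
```

Running the function a second time on its own output leaves the text unchanged, so it is safe to apply after every save.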

But all in all, Simos has written a very handy application that greatly simplifies designing a keyboard layout, so a big thank-you to him for making my work a good bit easier!

Swahili segmenter now online

May 27th, 2010

At the weekend I finally managed to get the segmenter tidy enough to release. The web version is here, and the code is available for download from a Git repository. This also includes a pretty detailed manual on how to get it working from scratch on an Ubuntu machine.

Beata has found a couple of minor problems so far, and I’ll fix these when I get some other stuff finished. More testing and comments would be very welcome.

A Swahili verb analyser

April 10th, 2010

Many years ago I studied Bantu languages, and I’ve recently returned to perhaps the best-known of them, Swahili. On the FreeDict list, Piotr Bański and Jimmy O’Regan had noted the absence of a free (GPL) morphological analyser for Swahili – some have been written, but they are not available under a free license. I have now completed a free (GPL) analyser for Swahili verbs (analysing the nouns is relatively easy), and my hope is that it might not be all that difficult to port to other Bantu languages like Shona or Zulu.

My first idea was to write a generating conjugator like the one I did for Welsh, where I would set up rules and forms in a database, and then scripts would stick all these together. This would have been much easier to do for a Bantu language than for an inflected language like Welsh. Even though many of the forms would have been semantically dubious, even if morphologically possible, that would not have mattered, since they could just sit quietly in the database, offending no-one. The main drawback would be that as new verbs were added to the dictionary, the relevant forms would have to be generated, but that could be done by a script.

So I took the first steps of generating forms for the current (-na-) tense, adding subject and object pronouns for all the classes. Hmm. A first run-through yields about 400 forms for -ambia (say, tell). And it turns out that of these 400 “possible” forms, only 15 occur in Kevin Scannell’s 5m-word Crúbadán Swahili corpus. Worrying – let’s (roughly) do the maths: 20 subject prefixes x 20 object prefixes x 25 tenses x 20 relatives x (say) 2,000 verbs (initially) = 400m entries! Not really practical, I think …
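The back-of-envelope sum can be written out as a few lines of Python. The figures are just the round numbers quoted above, not measured counts, and the variable names are only for illustration.

```python
# Round figures from the estimate above (not measured counts).
subject_prefixes = 20
object_prefixes = 20
tenses = 25
relatives = 20
verbs = 2_000

# Subject x object combinations for one verb in one tense.
forms_per_tense = subject_prefixes * object_prefixes
# All tense and relative combinations for one verb.
forms_per_verb = forms_per_tense * tenses * relatives
# The whole generated table.
total_entries = forms_per_verb * verbs

# Share of the generated -na- forms of -ambia attested in the corpus.
attested = 15
coverage = attested / forms_per_tense

print(f"forms per verb in one tense: {forms_per_tense:,}")
print(f"forms per verb overall: {forms_per_verb:,}")
print(f"entries for {verbs:,} verbs: {total_entries:,}")
print(f"attested share of one tense: {coverage:.2%}")
```

On these numbers each verb contributes 200,000 rows, and fewer than 4% of the generated forms for the one tense tested actually turn up in the corpus.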

So instead I’ve written something which segments and tags the verbal form given to it. Type in anawaambia (he/she is telling you/them), for instance, and you get:

a[sp1-3s]+na[curr]+wa[op2-2p,op2-3p]+ambia (tell)

or for alivyompiga (how he hit him):

a[sp1-3s]+li[past]+vyo[rel8]+m[op1-3s]+piga (hit)

where rel8 is “relative particle of class 8”, sp1-3s is “subject pronoun of class 1, third person singular”, curr is “current present tense”, and so on. This has the big benefit that you don’t need to generate the forms beforehand and add them to the dictionary. Of course, you won’t get a verb lemma until you put an entry for it into the dictionary!
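To make the idea concrete, here is a toy sketch of slot-based segmentation in Python. It is not the analyser's code (which is PHP working against a database): the morpheme tables are a tiny hypothetical subset, the slot order is simplified, and the names `segment` and `render` are invented for the example.

```python
# Toy morpheme tables: a tiny hypothetical subset, not the real data.
SUBJECT = {"a": ["sp1-3s"], "ni": ["sp1-1s"], "wa": ["sp2-3p"]}
TENSE = {"na": ["curr"], "li": ["past"]}
RELATIVE = {"vyo": ["rel8"]}
OBJECT = {"wa": ["op2-2p", "op2-3p"], "m": ["op1-3s"]}
LEXICON = {"ambia": "tell", "piga": "hit"}

# Simplified slot order: subject, tense, [relative], [object], then the stem.
# Each entry is (table, required).
SLOTS = [(SUBJECT, True), (TENSE, True), (RELATIVE, False), (OBJECT, False)]


def segment(word, slot=0):
    """Return every analysis of word as a list of (morpheme, tags) pairs."""
    if slot == len(SLOTS):
        # All prefix slots consumed: the remainder must be a known stem.
        return [[(word, [LEXICON[word]])]] if word in LEXICON else []
    table, required = SLOTS[slot]
    analyses = []
    for morpheme, tags in table.items():
        if word.startswith(morpheme):
            for rest in segment(word[len(morpheme):], slot + 1):
                analyses.append([(morpheme, tags)] + rest)
    if not required:
        # Optional slot: also try leaving it empty.
        analyses.extend(segment(word, slot + 1))
    return analyses


def render(analysis):
    """Format an analysis in the tagged notation used above."""
    *prefixes, (stem, gloss) = analysis
    parts = [f"{m}[{','.join(tags)}]" for m, tags in prefixes]
    return "+".join(parts + [f"{stem} ({gloss[0]})"])


for form in ("anawaambia", "alivyompiga"):
    for analysis in segment(form):
        print(render(analysis))
```

Because the stem is looked up last, a form whose verb is missing from the lexicon simply returns no analysis, which mirrors the point about needing a dictionary entry before you get a lemma.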

At the moment, the system is working in a console, which is useful for debugging, but I’ll add a web interface for it. The aspects I’m focussing on now are a disambiguator and a rudimentary grammar checker.

The disambiguator is a bit like constraint grammar, but working on morpheme tags inside the word instead of across word boundaries, and of course it’s using PHP regexes working on a database entry instead of C++! This is helping to tighten up the analysis – in fact, as I was parsing an example to put in here, I realised several rules could be conflated by changing just a couple of things, so I’ll use a simpler example: with singejua (I would not know), the entry for the negative imperative marker is removed to leave the (correct) negative first person singular subject pronoun, so:

si[neg+sp1-1s,neg-imp]+nge[cond]+jua (know)

becomes:

si[neg+sp1-1s]+nge[cond]+jua (know)
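A rule of this kind can be sketched as a regex substitution on the tagged string. The version below is in Python rather than PHP, and the single rule in it is my reconstruction of the example above, not one of the analyser's actual rules; `RULES` and `disambiguate` are illustrative names.

```python
import re

# Hypothetical rule: the negative imperative reading of "si" is dropped
# when the next morpheme is tagged as conditional.
RULES = [
    (
        re.compile(r"(?<![a-z])si\[([^\]]*?),neg-imp\](?=\+\w+\[cond\])"),
        r"si[\1]",
    ),
]


def disambiguate(analysis):
    """Apply each rule in turn to a tagged analysis string."""
    for pattern, replacement in RULES:
        analysis = pattern.sub(replacement, analysis)
    return analysis


print(disambiguate("si[neg+sp1-1s,neg-imp]+nge[cond]+jua (know)"))
```

The lookahead keeps the rule contextual: the competing tag is only removed when a conditional marker follows, so other uses of si- keep both readings.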

The checker is needed because the analyser trusts you to put in correct forms – it will analyse incorrect forms as best it can. It would be difficult, on this implementation model, to completely rule out all incorrect forms (although the original generator model would have done this), but it is feasible to flag the most obvious, and this is what I will be doing. For instance, if you enter *hawasingejua (*they would not know), you get the following:

Incorrect: There are two negatives here. Either remove the initial negative ‘ha-’ (corrected below), or use ‘nge’ instead of ‘singe’.

wa[sp2-3p]+singe[neg-cond]+jua (know)

with a correct form (wasingejua) offered instead. I’m not sure yet how best to cater for this in command-line mode (in cases where the analyser is being used without supervision to tag text in bulk).
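As an illustration of the double-negative check only, here is a minimal Python sketch that works on the surface form. The real checker presumably works on the tagged analysis, so the pattern, the `check` function and its return shape are all assumptions.

```python
import re

# Hypothetical surface pattern: initial negative "ha-" followed later
# by the negative conditional "-singe-".
DOUBLE_NEGATIVE = re.compile(r"^ha(?P<rest>[a-z]+?singe[a-z]+)$")


def check(word):
    """Return (ok, message, suggestion) for a verb form."""
    match = DOUBLE_NEGATIVE.match(word)
    if match:
        message = "There are two negatives here."
        # Suggest the form with the initial negative removed.
        return (False, message, match.group("rest"))
    return (True, "", word)


print(check("hawasingejua"))
print(check("wasingejua"))
```

Returning a flag and a suggestion instead of printing a message is one possible way to cope with unsupervised bulk tagging, since the caller can then decide whether to log, correct or skip the form.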

As regards verbal extensions (where -pika (cook) can produce -pikwa, -pikia, -pikiwa, -pikisha, -pikika, and so on), these are not handled directly in the analyser. The main reasons for this are (a) they are less productive (of the 8 or so main extensions, many verbs may have only a few in common use) and (b) the morphology is more variable (often depending on the source of the verb), which makes analysis more complicated. So my current plans are to handle them in a revised version of Beata Wójtowicz’ FreeDict Swahili dictionary, with the extensions being marked in the verb entry (eg -pikisha, v, caus) along with the root of the verb (so that other extensions of the same root will come up in any search). This means that nilipikiwa (I had something cooked for me) might show up in the analyser as something like:

ni[sp1-1s]+li[past]+PIK+prep+pass (have something cooked for one)

meaning that the prepositional and passive extensions are marked in the analysis.

There are undoubtedly many shortcomings in this first version of the analyser, but at least the code (such as it is) will be out there for people to comment on and amend. It may be that it can be rewritten in a compiled language to make it faster, or that the existing constraint grammar engine can be included to make the disambiguation more flexible. Since it’s less than 500 lines of PHP, it should be easy to get to grips with.

Font-face …?

April 2nd, 2010

أَلِپٗپٖنْدَ مَنَانِ · كَمُؤٗنَ مُعَيَنِ

كُنَ كِسِمَ مْوِٹُنِ · أَكٖنْدَ كُچَنْڠَلِيَ

alipopenda manani ✽ kamuona mu’ayani

kuna kisima mwiţuni ✽ akenda kuchangaliya

Swahili layout now part of xkb

April 2nd, 2010

Thanks to fast work by Sergey Udaltsov, the proposed keyboard layout for Swahili in Arabic script is now in the xkeyboard git tree. This means that at some point in the near future you will not have to edit your xkb files manually, as in the howto. All you will need to do is choose the new Tanzania or Kenya layouts on your keyboard switcher, and you will get the Arabic script layout automatically. Having this available as a default in all GNU/Linux distros should make it a lot easier to get started.

Swahili in Arabic script: a howto

March 27th, 2010

I’ve written up what I did to set up my machine to write Swahili in Arabic script, and the result is contained in this howto document. The keyboard layout file listed in Annex 1 can be downloaded here. Any corrections or additions are welcome.

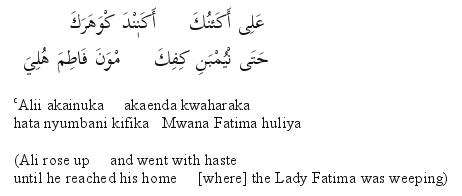

I give another example there of a transcription from the Jaafari manuscript:

What has actually surprised me is that it is almost as fast to type Swahili in Arabic script as it is in Roman, and it takes much less time than it would to add all the diacritics to make a proper transliteration of the manuscript.

I’m not sure whether this has already been done for other African languages that have used Arabic script in the past (eg Hausa), but it seems a useful way of using modern technology to help safeguard an important area of cultural heritage.

Swahili in Arabic script on WordPress

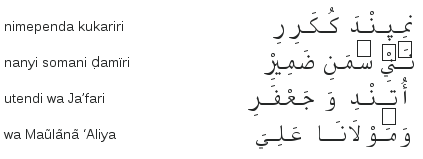

March 26th, 2010

It’s possible to get Swahili in Arabic script to display quite nicely in WordPress on Linux (Kubuntu 9.10) Firefox (3.5.7), after a bit of tweaking. Here is the last stanza of the Utendi wa Ja’afari:

نَنْيِ سٗمَنِ ضَمِيْرِ nanyi somani ḍamïri

أُتٖنْدِ وَ جَعْفَرِ utendi wa Ja’fari

وَمَوْلاَنَا عَلِيَ wa Maũlãnã ‘Aliya

You need to have the SIL Scheherazade font installed to see it to best advantage. It should look like this:

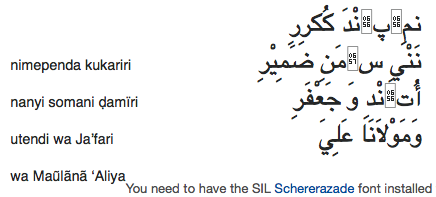

The default fonts on Linux seem to be missing glyphs for 067E (peh) in serif or non-serif fonts, and the glyph for 06A0 (ain with three dots above) doesn’t show up in a medial form. (That makes typing into the edit box on WordPress a little more difficult than it need be, but it’s not a show-stopper.) Even with Scheherazade installed, Konqueror (4.3.2) produces a bit of a mess unless I set the fallback font in the stylesheet to monospace (see below). Even then, it doesn’t see the glyph for 0656 (subscript alef) which I’m using for the vowel e (in the absence of a vertical equivalent of 0650, kasra) or 0657 (inverted damma) for the vowel o. That means it puts a box there:

An older version of Konqueror (3.5.5) on openSUSE 10.2 puts the boxes inline, and this leads to medials not being joined.

On Apple Mac OSX (10.4.11) with Scheherazade installed, Safari (3.1.1) behaves similarly to the older version of Konqueror on Linux (ie boxes instead of e, and medials unjoined). Firefox (3.0.17) is roughly the same, but it doesn’t even seem to see the Scheherazade font, so it falls back to monospace and gets the alignment of the <span> (see below) wrong:

I have no Microsoft Windows machine to test this on.

The default setup produces Arabic script that is too minuscule for my old eyes (I have the same problem with Chinese!), so I’ve added a couple of CSS stanzas to the theme stylesheet to adjust positioning and size:

font-family: Scheherazade, monospace;

font-size: 220%;

line-height: 130%;

direction:rtl;

unicode-bidi: embed;

text-align: right;

width: 80%;

}

.trlit {

font-family: "Liberation Sans", sans-serif;

font-size: 50%;

margin-left: 50px;

text-align: left;

float:left;

}

The first handles the Swahili, and the second handles the transliteration.

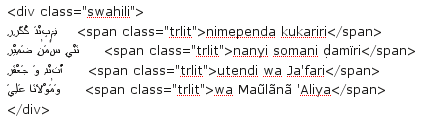

Then the actual Swahili in the post looks like this:

I’m sure all of this (especially the CSS) could be done more elegantly, but it’s a start. There are many things I still have to figure out, though. My next post will be on setting up your PC to type Swahili in Arabic script.